AI Voice Cloning: Is It Safe and Legal?

Is AI voice cloning safe and legal? Here’s what creators should know about voice cloning tools like ElevenLabs, Murf.ai, and RecCloud in 2026.

AI voice cloning is one of the most fascinating developments in artificial intelligence.

With just a short audio sample, modern AI tools can generate speech that sounds almost identical to a real person. For creators, this opens up huge possibilities: faster content production, consistent narration, and the ability to scale audio content without spending hours recording voiceovers.

But it also raises an important question:

Is AI voice cloning actually safe and legal to use?

The answer depends on how the technology is used. While voice cloning can be incredibly useful for creators, there are ethical and legal boundaries that users need to understand.

What Is AI Voice Cloning?

AI voice cloning is a technology that uses machine learning models to replicate a person’s voice.

Instead of generating generic text-to-speech audio, voice cloning analyzes a real speaker’s tone, cadence, and speech patterns. The AI can then generate new audio that sounds like the original speaker saying words they never actually recorded.

Many modern AI voice generators now include this feature.

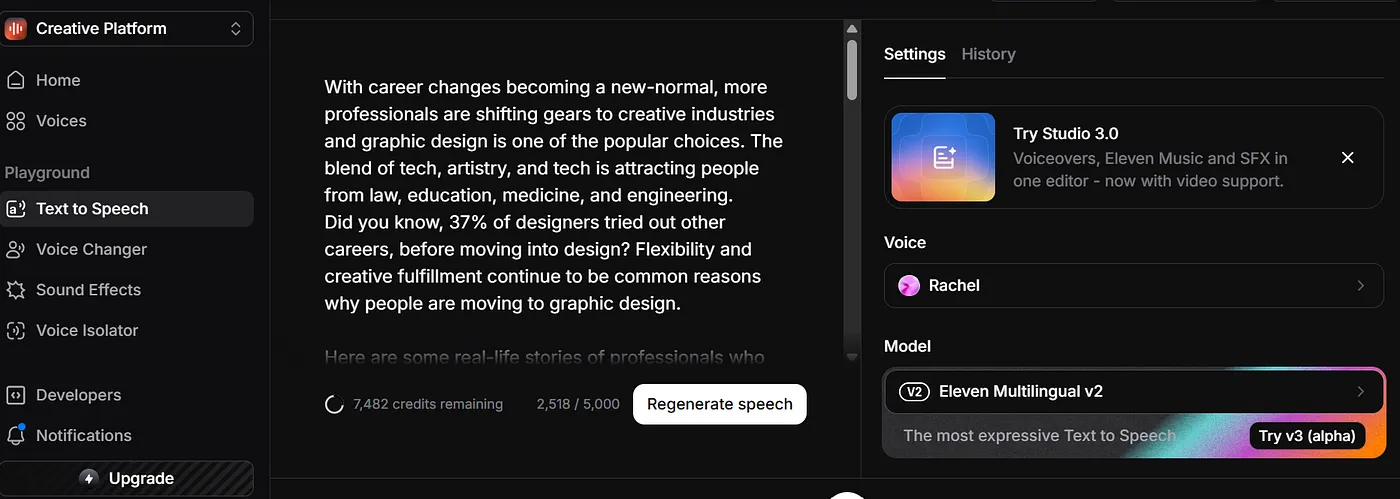

For example, ElevenLabs is widely known for its advanced voice cloning technology. With a short audio clip, users can create a synthetic version of their voice for narration, audiobooks, or podcasts.

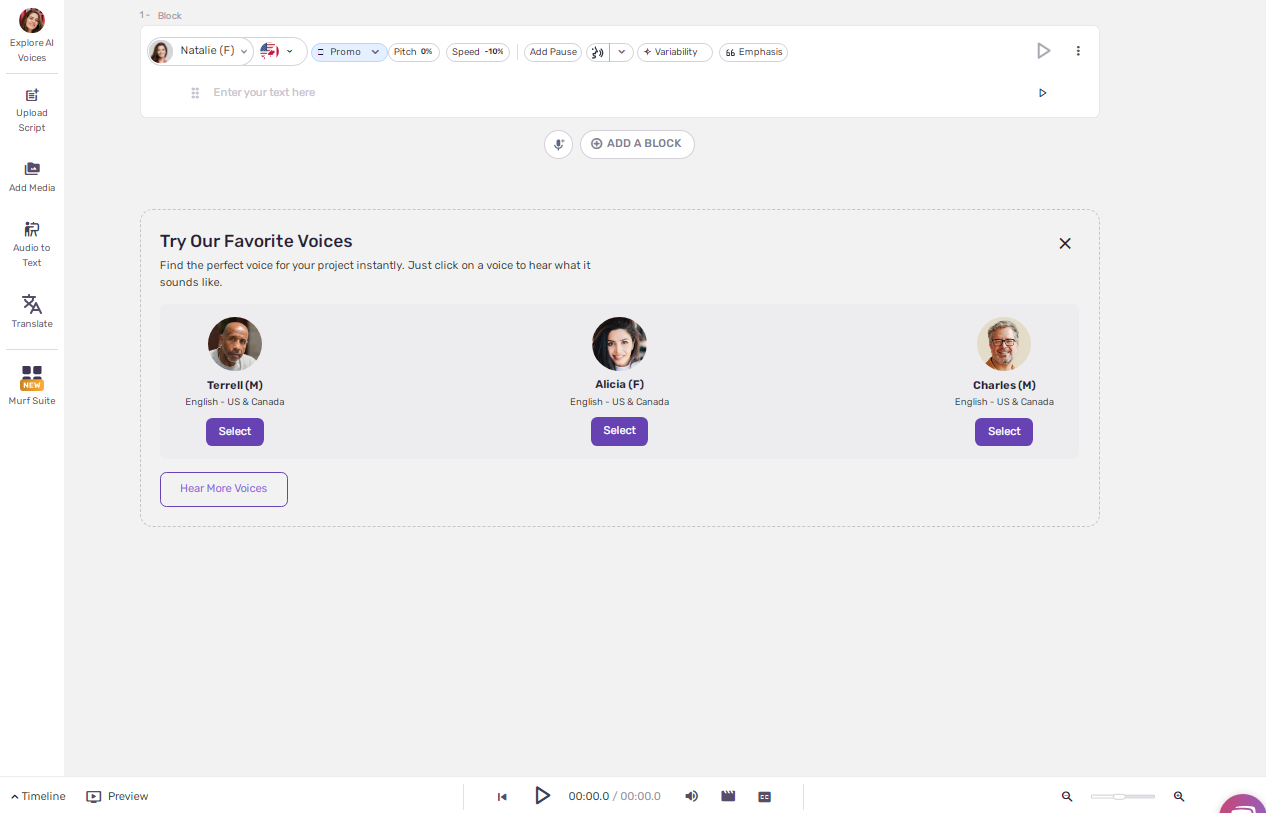

Murf.ai also offers voice cloning features alongside its text-to-speech engine, allowing creators to generate narration in their own voice without recording every script.

Some creator tools even integrate voice cloning into larger production workflows. Platforms like RecCloud, for example, combine voice generation with video, subtitle, and content tools to help creators produce media quickly.

The technology has improved dramatically in the past two years, and in many cases the cloned voice can sound extremely convincing.

Is AI Voice Cloning Legal?

In most places, AI voice cloning is legal when you are cloning your own voice or have permission from the person whose voice you are using, although the specific rules vary by country and region.

For example, it is generally considered acceptable if:

- You clone your own voice for YouTube videos or podcasts

- A voice actor licenses their voice for AI training

- A company creates an AI voice, with the speaker’s consent, for internal content or marketing

Many platforms have policies requiring users to confirm they have permission before cloning someone’s voice.

Problems arise when voice cloning is used without consent.

Cloning someone’s voice without their permission, especially for impersonation, scams, or misleading content, can lead to serious legal issues.

In several countries, voice likeness is increasingly treated as a protected identity right, similar to image rights or personal likeness.

Safety Concerns Around AI Voice Cloning

While voice cloning is a powerful tool, it also comes with risks.

One of the biggest concerns is voice impersonation. Because AI can reproduce speech patterns convincingly, bad actors can use cloned voices to imitate someone else, for example in scam phone calls.

This is why many voice AI companies have added safeguards such as:

- Identity verification

- Consent requirements

- Watermarking or detection systems

ElevenLabs, for example, requires users to verify that they have permission before cloning a voice. These policies are designed to prevent misuse while still allowing creators to use the technology responsibly.

When Voice Cloning Is Useful for Creators

When used ethically, voice cloning can actually make content creation much easier.

Creators often use voice cloning for:

- YouTube narration

- Podcast production

- Audiobook recording

- Online course creation

Instead of recording every line manually, a creator can generate narration from a script using their cloned voice.

This is especially useful if you produce content frequently or need to update scripts regularly.

For example, a YouTube creator might record a short training sample once using a tool like ElevenLabs or Murf.ai. After that, they can generate narration for new videos without re-recording the entire script.

For busy creators, this can save hours of work.

Ethical Use of AI Voice Cloning

Like many AI technologies, voice cloning is neutral; its impact depends on how it’s used.

Responsible use generally follows a few simple principles:

- Only clone voices with permission

- Avoid impersonating real people without consent

- Disclose AI-generated narration when appropriate

Following these guidelines helps maintain trust with audiences while still benefiting from the efficiency AI provides.

Final Thoughts

AI voice cloning is rapidly becoming part of the creator toolkit.

Tools like ElevenLabs, Murf.ai, and RecCloud have made it possible to generate realistic narration with very little effort. For YouTubers, educators, and podcasters, this technology can dramatically reduce production time.

But with that power comes responsibility.

As long as creators use voice cloning ethically and with proper consent, it can be a powerful and legitimate tool for content creation.

If you're curious how voice cloning compares across different platforms, you can read my full comparison of the best AI voice generators, where I tested more than 25 tools used by creators today.

Affiliate Disclosure: This article contains affiliate links. If you purchase through them, I may earn a small commission at no extra cost to you.